Introduction

MuleSoft is famous for disrupting the enterprise integration space through its scalable, extensible runtime engine and API-led connectivity approach to development. With their release of Anypoint Platform, customers also gained a unified suite of tools for handling the entire API development lifecycle. When the need to relieve customers of the burden of infrastructure management became clear, MuleSoft released their CloudHub solution to provide an integration platform as a service. More recently, in response to the increasing customer demand for multicloud deployments, MuleSoft released Anypoint Runtime Fabric (RTF). In this series of blog articles, we will cover the following topics related to RTF:

- Overview

- Architecture

- Installation

- Deploying Applications (this article)

- Maintenance and Troubleshooting

In this article, we will cover three issues related to running applications in RTF:

- RTF Ingress

- Resource Allocation

- Mandatory Maven Repo (Exchange)

Hold on to your hats! There’s going to be a bit more technical detail in here than we’ve seen in previous articles in this series.

RTF Ingress

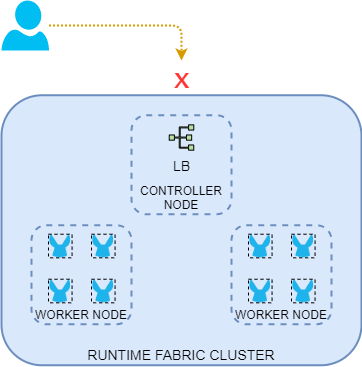

By default, applications and proxies deployed to RTF are not reachable from outside the cluster.

Figure 1 – Inbound traffic not allowed until RTF ingress is configured.

There are several reasons for this, but what concerns us is that a TLS certificate must be loaded onto the controllers before inbound traffic to the cluster can be enabled. The certificate is used to enable an ingress load balancer for the underlying k8s cluster, providing TLS termination, load balancing, and optionally enhanced security via Anypoint Security Edge policies.

The Common Name (CN) of this certificate determines how the ingress routes incoming requests to the Mule applications or proxies running in the cluster. From the MuleSoft documentation:

- If the CN contains a wildcard, the endpoint for each deployed application takes the form {app-name}.{common-name}

- If the CN does not contain a wildcard, the endpoint takes the form {common-name}/{app-name}

For example, with an ingress certificate with a CN of *.randomretail.com and an application name of orders, the application URL would be https://orders.randomretail.com. If we were to deploy that same application to an RTF with a certificate using CN of api.randomretail.com, then the application URL would look like https://api.randomretail.com/orders.
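The routing rule is simple enough to sketch in a few lines of Python. This is only an illustration of the rule quoted above, not MuleSoft code; the function name `rtf_endpoint` is made up for this example, and it assumes a wildcard CN always starts with `*.`.

```python
def rtf_endpoint(common_name: str, app_name: str) -> str:
    """Return the application URL implied by the certificate CN.

    Hypothetical helper that mirrors the documented rule; it is not
    part of any MuleSoft library.
    """
    if common_name.startswith("*."):
        # Wildcard CN: the application name takes the place of the wildcard.
        return f"https://{app_name}.{common_name[2:]}"
    # Single-host CN: the application name becomes the first path segment.
    return f"https://{common_name}/{app_name}"


print(rtf_endpoint("*.randomretail.com", "orders"))
print(rtf_endpoint("api.randomretail.com", "orders"))
```

Run against the two certificates from the example, this prints https://orders.randomretail.com and https://api.randomretail.com/orders.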

DNS must then be configured accordingly to resolve either the single CN or multiple CNs to your controller IP or external load balancer.

Once you have the certificate and DNS ready, the certificate can be loaded into the RTF cluster via Runtime Manager > Runtime Fabrics > Inbound Traffic. There are a number of useful configuration options available here, so review the process documentation for details.

Resource Allocation

One of the many benefits of RTF is its efficient allocation of resources for Mule applications.

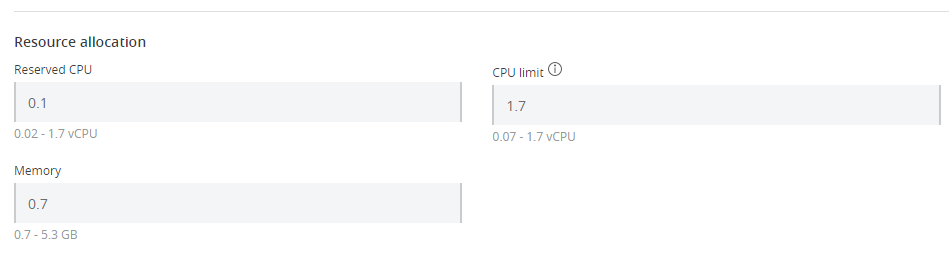

Figure 2 – Resources can be allocated in a very granular fashion.

For individual applications, as few as 0.02 CPU cores and 0.7 GB of RAM can be allocated, allowing for very efficient use of server resources and license units. However, customers should stay within the recommended limits, and also be aware of the impact that small allocations have on application startup times and performance. Furthermore, in the latest release of RTF, applications can be guaranteed a minimum amount of CPU, but still be allowed to burst to take advantage of available CPU on the worker node.
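To get a feel for what this granularity means in practice, here is a rough capacity sketch in Python. It rests on assumptions that are not from the RTF documentation: that the reserved CPU (not the burst limit) is what counts against a worker node's capacity, as with Kubernetes resource requests, and that the capacity consumed by RTF's own services is ignored. The class and function names are hypothetical.

```python
from dataclasses import dataclass

# Minimums quoted in this article (0.02 cores, 0.7 GB), kept as integers
# (millicores and MB) to avoid floating-point rounding.
MIN_CPU_MILLICORES = 20
MIN_MEMORY_MB = 700


@dataclass(frozen=True)
class ReplicaSize:
    reserved_millicores: int  # CPU the replica is guaranteed
    limit_millicores: int     # ceiling the replica may burst to
    memory_mb: int

    def validate(self) -> None:
        if self.reserved_millicores < MIN_CPU_MILLICORES:
            raise ValueError("reserved CPU is below the 0.02-core minimum")
        if self.limit_millicores < self.reserved_millicores:
            raise ValueError("CPU limit cannot be lower than reserved CPU")
        if self.memory_mb < MIN_MEMORY_MB:
            raise ValueError("memory is below the 0.7 GB minimum")


def replicas_per_node(size: ReplicaSize, node_millicores: int, node_memory_mb: int) -> int:
    """Estimate how many replicas of one size fit on a worker node.

    Assumption: only reserved CPU and memory count against the node;
    platform overhead is ignored, so treat the result as an upper bound.
    """
    size.validate()
    by_cpu = node_millicores // size.reserved_millicores
    by_memory = node_memory_mb // size.memory_mb
    return min(by_cpu, by_memory)


small = ReplicaSize(reserved_millicores=100, limit_millicores=500, memory_mb=700)
print(replicas_per_node(small, node_millicores=2000, node_memory_mb=8000))
```

In this example, memory rather than CPU is the constraint: a node with 2 cores and 8,000 MB fits 11 of these replicas, even though the reserved CPU alone would allow 20.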

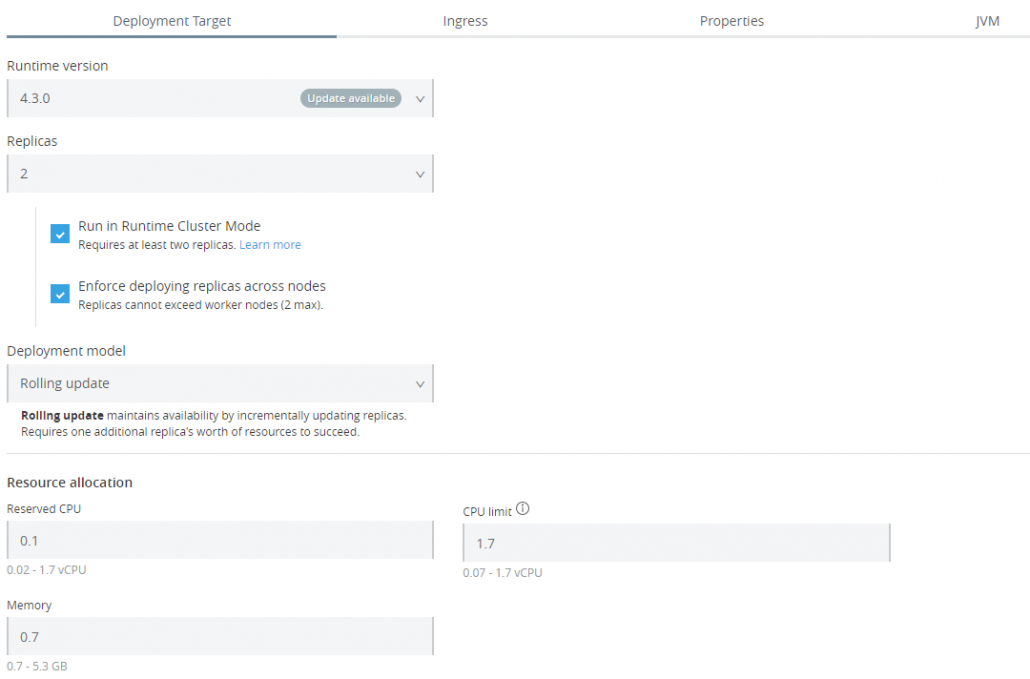

There is also great flexibility regarding high availability and orchestrating updates. Review the deployment documentation to get a good understanding of the available options.

Figure 3 – Lots of options for customizing application deployments

Mandatory Maven Repository

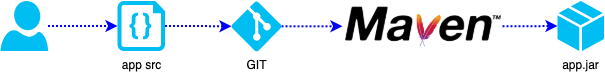

One of the unique things about RTF is the way applications are deployed to it. To understand the difference between RTF and other runtime options (e.g. CloudHub and customer-hosted runtimes), it is useful to see what they all have in common. Regardless of the runtime, it is both the best and the most common practice to commit Mule application source code to version control (e.g. a GitHub repository), then build that source using Maven on a CI server such as Jenkins or Bamboo:

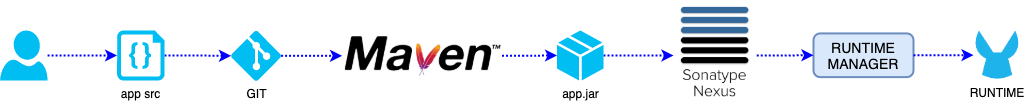

Figure 4 – Application JAR is built from source by Maven, usually running as part of a build job

At this point, the JAR file is ready to deploy. In the case of CloudHub or customer-hosted runtimes, it could technically be deployed directly via the Runtime Manager APIs or by dropping the JAR file in the Mule apps directory on a customer-hosted server. However, it is also a best practice to place a copy of that JAR in an artifact repository such as Nexus, Artifactory, or Anypoint Exchange before deploying it, in order to preserve the history of deployed artifacts and facilitate rollbacks:

Figure 5 – This pipeline includes an artifact repository

This would require two different Maven deploy commands, one for the artifact repository (Nexus, in this example) and one for Runtime Manager. The repository step is highly recommended, but optional. Customers could easily bypass the repository and just run a single Maven deploy to send the application to the runtime via the Runtime Manager API.

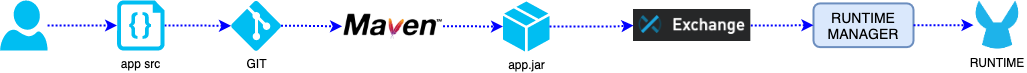

In the case of RTF, however, MuleSoft enforces the use of an artifact repository in order to facilitate containerized deployments (and rollbacks). Moreover, MuleSoft currently supports only Exchange as the artifact repository for these RTF-bound JAR files. Therefore, a typical CI build job will include two Maven commands: one for pushing the application JAR to Exchange, and another for deploying from Exchange to RTF via the Runtime Manager API.

Figure 6 – Application is built by Maven and pushed to Exchange, then a maven deploy is run to push that Exchange-hosted JAR to RTF

This is not obvious to those deploying through the Runtime Manager UI in the Anypoint Platform web interface, but it is important to understand when preparing to use RTF in a production-ready capacity. For more info regarding the available configuration options for deployable applications and Maven syntax, see the RTF and Mule runtime documentation.
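As a rough sketch, the two-step CI job could be orchestrated like this in Python. The Maven invocations are assumptions about a typical setup rather than a prescription: `mvn clean deploy` is taken to publish to Exchange because the project's POM points its distribution repository there, and `-DmuleDeploy` is the switch the Mule Maven plugin uses to trigger a deployment. The function name and the injected `run` callable are hypothetical, and your POM and plugin version may need different goals or flags.

```python
from typing import Callable, List, Sequence

# Assumed commands; adjust to your own pom.xml and plugin configuration.
PUBLISH_TO_EXCHANGE = ["mvn", "clean", "deploy"]
DEPLOY_TO_RTF = ["mvn", "deploy", "-DmuleDeploy"]


def run_rtf_pipeline(run: Callable[[Sequence[str]], int]) -> List[str]:
    """Publish to Exchange, then deploy to RTF, stopping at the first failure.

    `run` executes one command and returns its exit code, for example
    subprocess.call. Returns the names of the steps that succeeded.
    """
    completed: List[str] = []
    steps = (("publish", PUBLISH_TO_EXCHANGE), ("deploy", DEPLOY_TO_RTF))
    for name, command in steps:
        if run(command) != 0:
            # The deploy step depends on the JAR already being in Exchange,
            # so nothing later runs once a step fails.
            break
        completed.append(name)
    return completed


# Dry run with a stand-in runner that only prints each command.
print(run_rtf_pipeline(lambda command: print(" ".join(command)) or 0))
```

On a real CI server, you would pass `subprocess.call` (or the CI tool's own shell step) instead of the stand-in runner. Keeping publish and deploy as separate steps makes it clear in the build log which half failed.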

Conclusion

Hopefully this discussion of several issues related to deploying applications to Runtime Fabric has been informative. In the next article, we will examine the various tools available for monitoring, troubleshooting, and other maintenance activities.